Cerebras Systems’ monster debut on Thursday not only ranked among the biggest IPOs in tech industry history, but also sent a clear signal of the seemingly unstoppable demand for AI chips as tech giants scramble to find alternatives to expensive and sold-out Nvidia graphics processing units.

Cerebras ended its first day of trading on Wall Street with a market capitalization of just under $100 billion, putting it in league with the few companies that have closed their debuts above that mark, including Facebook parent Meta and Alibaba. The stock closed 10% lower on Friday, its first full day of trading.

Here’s what you need to know about this notable Nvidia competitor.

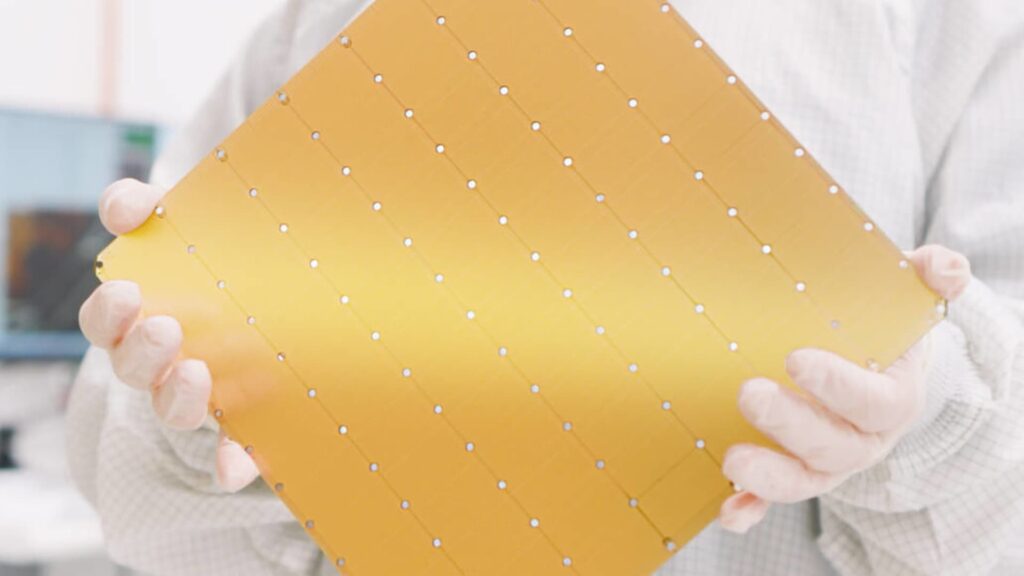

Cerebras makes a different type of chip than traditional Nvidia GPUs, and it’s about the size of a dinner plate.

“We make the largest chips in the semiconductor industry,” Cerebras CEO and co-founder Andrew Feldman told CNBC’s “Squawk Box” on Thursday. “A larger chip processes more information in less time and provides results more quickly.”

Nvidia has historically won the AI chip race because its GPUs serve as general-purpose workhorses and excel at the parallel computations needed to train large models. But now we are entering the age of agentic AI, where inference is key. Training teaches AI models to recognize patterns in large amounts of data, while inference is when a trained model makes decisions based on new information.

Inference can also be performed on less powerful chips built for more specific tasks, such as Cerebras’ WSE-3. It falls into a category of chips known as custom ASICs (application-specific integrated circuits). In-house ASICs are now made by Google, Amazon, Meta, Microsoft and others, making the field increasingly crowded.

According to Cerebras, WSE-3 is 57 times larger and has 50 times more transistors than the largest GPUs.

The most cutting-edge AI chips are manufactured on Taiwan Semiconductor Manufacturing Co.’s 2-nanometer process node, which is currently available only in Taiwan. Cerebras’ chips are also manufactured by TSMC, but on its less advanced 5-nanometer node.

Founded in Silicon Valley in 2016, Cerebras initially filed to go public in 2024, but withdrew the filing after facing scrutiny for its heavy reliance on a single customer, Microsoft-backed UAE AI company G42.

The company’s successful IPO on Thursday made two of its co-founders, Feldman and hardware technology chief Sean Lie, billionaires based on their stock holdings.

Cerebras 1 week stock price chart.

Cerebras has long sought to sell its chips to businesses, but now it primarily operates its chips as a cloud service within its own data centers, competing with cloud providers Google, Microsoft, Oracle and CoreWeave.

Cerebras and OpenAI announced a $20 billion cloud contract in January that expires in 2028, and Amazon Web Services announced in March that it was using Cerebras chips in its data centers.

“There’s so much demand for our high-speed inference products that actually supplying it is our biggest challenge. We’re adding as much manufacturing capacity and data center capacity as we can, but we’re still sold out in 2027,” Cerebras Chief Financial Officer Bob Komin told CNBC on Thursday.

While hyperscalers manufacture their own ASICs, Cerebras competes more closely with companies that specialize in manufacturing ASICs for other companies. At the top of the list is Groq. In its largest acquisition to date, Nvidia paid $20 billion for Groq’s technology in December and later announced a custom Groq language processing unit at GTC in March.

SambaNova and d-Matrix are two other notable Cerebras competitors looking to capitalize on the unprecedented demand for AI chips.

SambaNova counts Hugging Face and Meta among the customers for its SN50 chip. Intel participated in SambaNova’s $350 million funding round in February, and Intel CEO Lip-Bu Tan has been SambaNova’s chairman since 2017.

Cerebras’ IPO also paves the way for other custom ASIC startups looking to go public, such as Rebellions.

The South Korean chipmaker raised $400 million at a $2.34 billion valuation from Samsung and others in March in preparation for its IPO.
