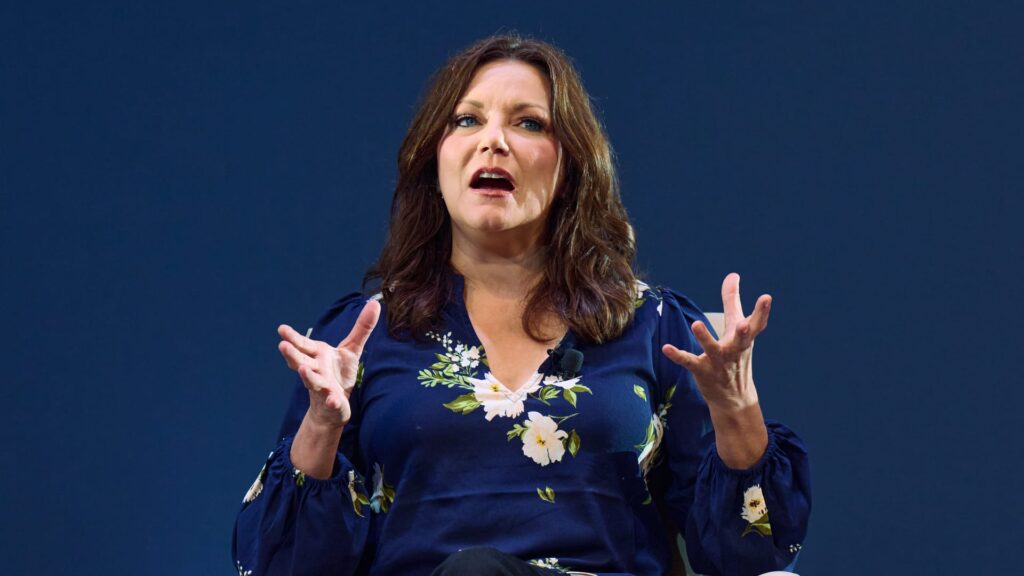

In May, country singer Martina McBride testified before the Senate Judiciary Subcommittee on Privacy, Technology, and the Law, speaking out against AI-generated deepfakes. Last week, she expanded on that testimony at the CNBC AI Summit in Nashville.

McBride, along with Morna Willens, chief policy officer at the Recording Industry Association of America (RIAA), spoke with CNBC's Courtney Reagan about the NO FAKES Act, a bipartisan bill aimed at protecting an individual's voice and likeness.

The acclaimed vocalist, a four-time Academy of Country Music Award winner, said that speaking out about deepfakes and the need for AI guardrails hits close to home. "The thing I'm most proud of in my career is my reputation and the fact that when I say something, my fans trust that it's true," McBride told an audience of technology executives, CFOs and CEOs.

The ability of AI to fake her voice and image means there is a real possibility that someone could take the lyrics of her songs, which highlight the horrors of domestic violence, and alter them to downplay or justify abuse. “At some point, you can’t tell what I’m saying and what someone is manipulating me with, and that’s scary,” she said.

Willens said she spends "100% of my time" on AI in her role at the RIAA because the technology is advancing so quickly. "I'm in talks with artists, managers and members of Congress to figure out the next steps," she said. "Is it regulation? I don't know, but there needs to be some kind of guardrails around this technology."

McBride told the Nashville audience that one of the biggest dangers of deepfakes is fraud. One fan nearly sold her own house to raise money after an AI-generated version of McBride claimed the singer needed cash. "AI will only make this type of fraud even more dangerous," McBride said.

Willens rejected the criticism that the music industry is somehow anti-AI or anti-technology. "The music industry has been at the cutting edge of technology for a while now," she said. Labels and artists have worked with Apple Music and Spotify on licensing for years, she explained, so the argument that categorizing an artist's library or body of work is too complex simply does not hold up.

The problem, Willens said, is a lack of transparency from the big AI companies. "I don't know if they're training on Martina's music, for example," she said. "And if she doesn't know what they're training on, she can't exercise her rights."

McBride was asked about the impact of deepfakes on the careers and livelihoods of young singers just starting out. "If someone can violate the bond between artist and fan and distort the picture young artists are presenting to the world about themselves, careers can be lost before they really take off," she said.

Another aspect of the danger posed by AI-generated deepfakes is retaliation, McBride said.

"If you lose your house because a deepfake of an artist says they need money, and you don't get that money back, that's an infuriating situation," she said. "I'm on stage in front of thousands of people, and I don't know how long it will be before I no longer feel safe doing that. This is a real physical risk that we need to consider."