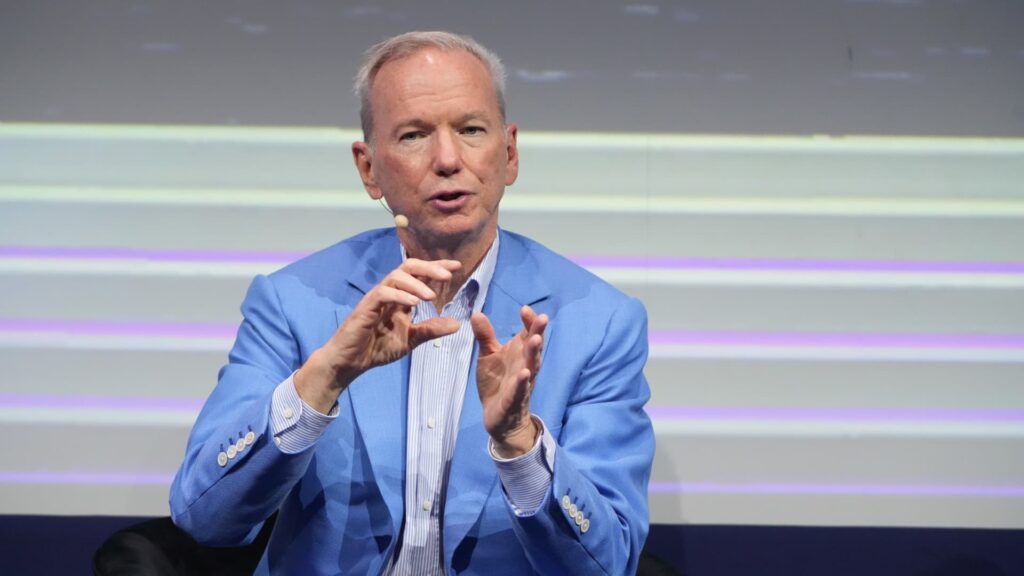

Former Google CEO Eric Schmidt spoke at the Sifted Summit on Wednesday, October 8th.

Bloomberg | Getty Images

Eric Schmidt, former CEO of Google, issued a stark warning about the dangers of AI and how susceptible it is to hacking.

Schmidt, who was Google’s chief executive from 2001 to 2011, warned of the “bad things AI could do” when asked during a fireside chat at the Sifted Summit whether AI could be more destructive than nuclear weapons.

“Could there be an AI proliferation problem? Yes,” Schmidt said Wednesday. The risks of AI proliferation include the technology falling into the wrong hands and being repurposed and misused.

“There is evidence that the models can be hacked to remove guardrails, whether they are closed or open. So during the training process they learn a lot. A bad example is learning how to kill people,” Schmidt said.

“All the big companies are making it impossible for these models to answer that question. Good decision. Everyone is doing this. They’re doing it well, and they’re doing it for the right reasons. There’s evidence that it can be reverse engineered, and there are plenty of other examples of that nature.”

AI systems are vulnerable to attacks, and several methods are used, such as prompt injection and jailbreaking. In prompt injection attacks, hackers hide malicious instructions in user input or external data such as web pages or documents to trick an AI into doing something it wasn’t intended to do, such as sharing private data or running harmful commands.

Jailbreaking, on the other hand, manipulates the AI’s responses to ignore safety rules and generate restricted or dangerous content.

In 2023, a few months after OpenAI’s ChatGPT was released, users employed a “jailbreak” trick to circumvent the safety instructions embedded in the chatbot.

This included creating an alter ego for ChatGPT called DAN, an acronym for “Do Anything Now,” and threatening to kill the chatbot if it did not comply. The alter ego could provide answers on how to commit illegal acts or list positive qualities of Adolf Hitler.

Schmidt said there is still no adequate “non-proliferation regime” in place to help limit the dangers of AI.

AI is “underrated”

Despite the stark warnings, Schmidt was optimistic about AI more broadly, saying the technology hasn’t received the hype it deserves.

“I wrote two books about this before Henry Kissinger died, and we came to the view that the arrival of alien intelligences that are completely different from us and more or less under our control is a very big problem for humanity. Why? So far, I think this paper proves that over time the level of capability of these systems will far exceed what humans can do,” Schmidt said.

“Now, the GPT series culminated with the ChatGPT moment for all of us. We got 100 million users in two months, which is extraordinary and really shows you the power of this technology. So I think it’s under-appreciated, not over-hyped. I look forward to being proven right in five or 10 years,” he added.

His comments come amid growing talk of an AI bubble, where valuations appear to be soaring as investors pour money into AI-focused companies, drawing comparisons to the bursting of the dot-com bubble in the early 2000s.

But Schmidt said he doesn’t think history will repeat itself.

“I don’t think that would happen here, but I’m not a professional investor,” he said.

“What I do know is that the people who are investing their hard-earned money believe the long-term financial returns are huge. Why else would they take the risk?”