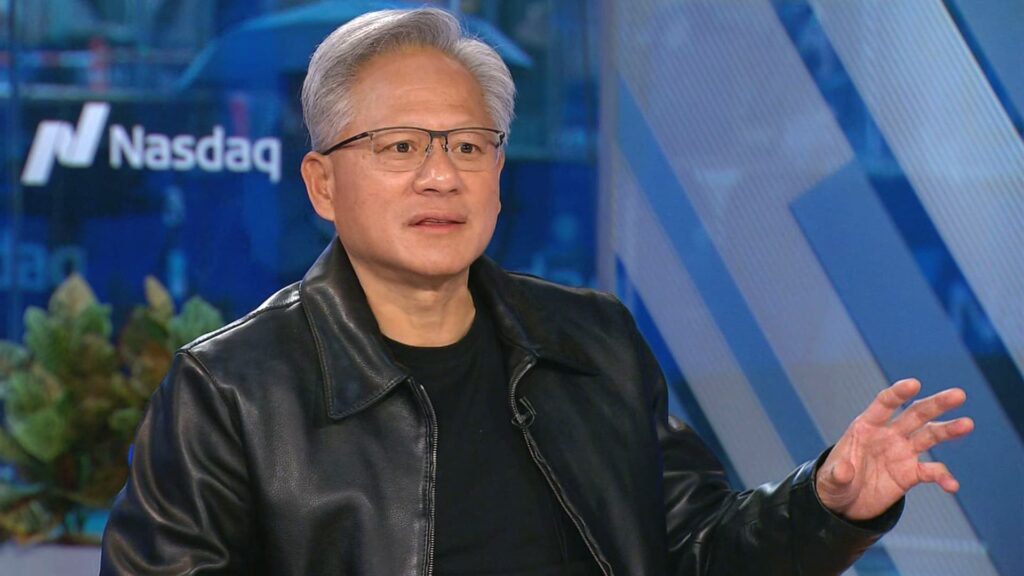

Nvidia CEO Jensen Huang said Wednesday that demand for artificial intelligence models has grown this year as they evolve from answering simple questions to complex reasoning.

“Computing demand has increased significantly this year, especially over the past six months,” Huang said on CNBC’s “Squawk Box.”

The AI chip leader’s CEO was answering questions about what investors want most from him. Nvidia stock rose about 2% on Wednesday, helping push the Nasdaq Composite higher.

AI reasoning models use exponentially more computing power, and because their results are so good, demand for them is also growing exponentially, Huang said.

“AI is smart enough that everyone wants to use it,” the CEO said. “Right now, two exponentials are occurring simultaneously.”

“The demand for Blackwell is really, really high,” he said of Nvidia’s most advanced graphics processing unit. “I think we are at the beginning of a new industrial revolution, the beginning of a new buildout.”

Nvidia announced last month that it would invest $100 billion in a massive data center buildout for OpenAI. OpenAI plans to build 10 gigawatts of data centers using Nvidia chips.

The scale of the AI industry’s plans raises questions about whether major companies will have the power they need to further their ambitions. Ten gigawatts is equivalent to the annual power consumption of 8 million U.S. households, or the peak baseline summer demand of New York City in 2024.

When asked who is winning the AI race, Huang said the United States is “not that far ahead” of China right now. Beijing is building the power needed to support AI much faster than the U.S., the CEO said.

“China is far ahead in energy,” Huang said.

The artificial intelligence industry needs to build new power generation off the electric grid so it can move quickly to meet demand and insulate consumers from rising power prices, he said. Data centers will need to be powered by natural gas and, at some point in the future, potentially nuclear power, the CEO said.

“We should invest in almost every possible way to generate energy,” Huang said. “Self-generating power in data centers can move much faster than putting it on the grid, and we have to do that.”